How to implement object classification (differentiating between a person, a vehicle, or other objects) in C#

There is a growing demand for automated public safety systems that detect unauthorized vehicle parking, intrusion, unattended baggage and similar events. Object classification in video is an important factor in improving the reliability of automatic applications in video surveillance systems, and it is a fundamental feature for advanced applications such as scene understanding or computer vision.

Object classification

Despite extensive research, existing methods show only moderate classification accuracy when tested on a large variety of real-world scenarios, or they do not meet the real-time constraints of a video surveillance system. A near-correct extraction of all pixels that belong to a moving object or to the background is crucial for tracking and classifying a moving object. In our examples the most common objects are pedestrians and vehicles.

Why is tracking and classifying an object problematic?

There are many situations and conditions which can make object tracking harder or almost impossible, such as:

- Illumination changes and shadows

- Arbitrary camera views

- Low-resolution imagery (objects are often less than 100 pixels in height or width)

- Projective image distortion (cameras with a large field of view)

- Groups of people may look like cars

In some environments, such as a mall or a shop, people are not the only things that pass in front of the camera, which makes object tracking even harder. People coming from grocery stores or similar shops often push trolleys ahead of them, or bring bikes or suitcases with them. These items usually appear about the same size as an adult or a child.

One of the biggest problems for automated surveillance is the detection of interesting objects within the field of view of the video camera. These objects can be persons, vehicles, animals or items.

Object Classification in Still Images

There are various methods for object classification in still images. They typically extract features by applying interest point detectors to the image and then try to reconstruct the shape from the distribution of the detected points.

Object Classification in Videos

Object classification in videos has mostly been addressed with silhouette features, that is, with shape-based classification. This type of classification is commonly used in surveillance systems in general and in action recognition in particular.

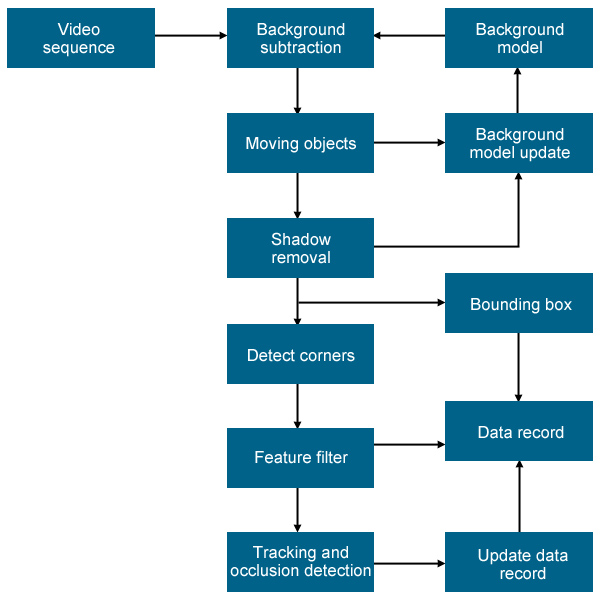

Our goal is to classify each visible moving object in the input video as a single person, a group of persons or a vehicle. The first step towards this goal is the detection and correspondence of each object. The tracking algorithm provides the bounding box, the centroid and the correspondence of each object over the frames. We then attempt to classify the object by detecting repetitive changes in its shape. In most cases the whole object is moving in addition to the local changes in its shape. This is why we need to compensate for the translation and the change in scale of the object over time before we can detect local changes.
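One possible way to look for repetitive shape changes is to reduce each compensated object mask to a single number and then check that series for periodicity with a normalized autocorrelation. The following is only a minimal sketch under stated assumptions, not the method of any particular SDK: the class `PeriodicityDetector`, its method names and the "pixels that differ from the first mask" signal are all hypothetical, and the masks are assumed to be `bool[,]` arrays of equal size that have already been compensated for translation and scale.

```csharp
using System;
using System.Collections.Generic;

public static class PeriodicityDetector
{
    // Hypothetical shape signal: for every frame, the number of pixels
    // that differ from the first mask. Assumes all masks have the same
    // dimensions and are already translation and scale compensated.
    public static double[] DifferenceFromFirst(IReadOnlyList<bool[,]> masks)
    {
        var signal = new double[masks.Count];
        for (int t = 0; t < masks.Count; t++)
        {
            int diff = 0;
            for (int r = 0; r < masks[0].GetLength(0); r++)
                for (int c = 0; c < masks[0].GetLength(1); c++)
                    if (masks[t][r, c] != masks[0][r, c]) diff++;
            signal[t] = diff;
        }
        return signal;
    }

    // Normalized autocorrelation of the signal at the given lag.
    // The result lies in [-1, 1]; a constant signal yields 0.
    public static double Autocorrelation(double[] signal, int lag)
    {
        int n = signal.Length;
        if (lag <= 0 || lag >= n) throw new ArgumentOutOfRangeException(nameof(lag));

        double mean = 0.0;
        foreach (double v in signal) mean += v;
        mean /= n;

        double num = 0.0, den = 0.0;
        for (int i = 0; i < n; i++)
        {
            double d = signal[i] - mean;
            den += d * d;
            if (i + lag < n) num += d * (signal[i + lag] - mean);
        }
        return den == 0.0 ? 0.0 : num / den;
    }

    // Lag in [minLag, maxLag] with the highest positive autocorrelation.
    // Returns (0, 0) when no lag has a positive score.
    public static (int Lag, double Score) StrongestPeriod(double[] signal, int minLag, int maxLag)
    {
        int bestLag = 0;
        double bestScore = 0.0;
        int last = Math.Min(maxLag, signal.Length - 1);
        for (int lag = Math.Max(1, minLag); lag <= last; lag++)
        {
            double score = Autocorrelation(signal, lag);
            if (score > bestScore) { bestScore = score; bestLag = lag; }
        }
        return (bestLag, bestScore);
    }
}
```

A walking person would be expected to give a clearly positive score at the lag that matches the gait cycle, while a rigid object such as a vehicle should give an almost flat signal and a low score. Any threshold on the score that separates the two cases is an assumption and has to be tuned on real footage.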

Carried Object Detection

A human silhouette is nearly symmetrical around the body axis when the person is in an upright position. During walking or running, parts of the arms or legs violate this symmetry, but they do so with a recurring local motion. A subregion of the silhouette that violates the symmetry consistently, without such recurring motion, is usually a carried object, e.g. a bag in the hand. Symmetry analysis therefore requires calculating the symmetry axis of the silhouette.
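The symmetry test can be sketched as follows. This is an illustration under simplifying assumptions rather than a definitive implementation: the silhouette is taken to be upright, so the symmetry axis is approximated by a vertical line at the median of the per-row midpoints (a principal-axis fit would be needed for tilted silhouettes), the masks are `bool[,]` arrays that are already aligned with each other, and the class `SymmetryAnalyzer`, its methods and the `minFraction` parameter are hypothetical names.

```csharp
using System;
using System.Collections.Generic;

public static class SymmetryAnalyzer
{
    // Approximates the vertical symmetry axis by the median of the
    // midpoints between the leftmost and rightmost foreground pixel
    // of every non-empty row (assumes an upright silhouette).
    public static double AxisColumn(bool[,] mask)
    {
        int rows = mask.GetLength(0), cols = mask.GetLength(1);
        var midpoints = new List<double>();
        for (int r = 0; r < rows; r++)
        {
            int left = -1, right = -1;
            for (int c = 0; c < cols; c++)
            {
                if (!mask[r, c]) continue;
                if (left < 0) left = c;
                right = c;
            }
            if (left >= 0) midpoints.Add((left + right) / 2.0);
        }
        if (midpoints.Count == 0)
            throw new ArgumentException("Mask has no foreground pixels.", nameof(mask));

        midpoints.Sort();
        int mid = midpoints.Count / 2;
        return midpoints.Count % 2 == 1
            ? midpoints[mid]
            : (midpoints[mid - 1] + midpoints[mid]) / 2.0;
    }

    // Marks foreground pixels whose mirror image across the axis
    // is not part of the silhouette.
    public static bool[,] AsymmetricPixels(bool[,] mask)
    {
        int rows = mask.GetLength(0), cols = mask.GetLength(1);
        double axis = AxisColumn(mask);
        var result = new bool[rows, cols];
        for (int r = 0; r < rows; r++)
            for (int c = 0; c < cols; c++)
            {
                if (!mask[r, c]) continue;
                int mirror = (int)Math.Floor(2.0 * axis - c + 0.5);
                bool hasPartner = mirror >= 0 && mirror < cols && mask[r, mirror];
                result[r, c] = !hasPartner;
            }
        return result;
    }

    // Keeps only pixels that are asymmetric in at least minFraction of
    // the frames. All masks must be aligned and have equal dimensions.
    public static bool[,] ConsistentlyAsymmetric(IReadOnlyList<bool[,]> alignedMasks, double minFraction)
    {
        if (alignedMasks.Count == 0)
            throw new ArgumentException("At least one mask is required.", nameof(alignedMasks));
        int rows = alignedMasks[0].GetLength(0), cols = alignedMasks[0].GetLength(1);
        var hits = new int[rows, cols];
        foreach (bool[,] mask in alignedMasks)
        {
            bool[,] asym = AsymmetricPixels(mask);
            for (int r = 0; r < rows; r++)
                for (int c = 0; c < cols; c++)
                    if (asym[r, c]) hits[r, c]++;
        }
        var result = new bool[rows, cols];
        for (int r = 0; r < rows; r++)
            for (int c = 0; c < cols; c++)
                result[r, c] = hits[r, c] >= minFraction * alignedMasks.Count;
        return result;
    }
}
```

Pixels that are asymmetric in only a few frames, such as a swinging arm, drop out of the result of `ConsistentlyAsymmetric`, while a region that sticks out on one side in nearly every frame remains as a carried-object candidate. The median is used for the axis so that a bag covering fewer than half of the rows does not pull the axis towards itself.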

A moving object often changes its position and size within the bounding box. To eliminate mask changes that are not caused by a change in shape, the translation and the change in scale of the object mask over time need to be compensated. The assumption is that the only reason for a change in the size of the shape is a change in the distance between the object and the camera. The translation is compensated by aligning the object masks on their centroids. To compensate for scale, the object mask is scaled in the horizontal and vertical directions so that the width and height of its bounding box match those of the first observation.
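The compensation step could look like the sketch below. It is an illustration only: the class `MaskNormalizer`, its methods and parameters are hypothetical names, the mask is assumed to be a `bool[,]` array indexed as `[row, column]`, and nearest-neighbor sampling is chosen for simplicity. The reference width and height are meant to be the bounding box size measured on the first observation of the object.

```csharp
using System;

public static class MaskNormalizer
{
    // Bounding box size and centroid of the foreground pixels.
    public static (int Width, int Height, double CentroidX, double CentroidY) Measure(bool[,] mask)
    {
        int rows = mask.GetLength(0), cols = mask.GetLength(1);
        int minR = rows, maxR = -1, minC = cols, maxC = -1;
        long sumR = 0, sumC = 0, count = 0;
        for (int r = 0; r < rows; r++)
            for (int c = 0; c < cols; c++)
            {
                if (!mask[r, c]) continue;
                minR = Math.Min(minR, r); maxR = Math.Max(maxR, r);
                minC = Math.Min(minC, c); maxC = Math.Max(maxC, c);
                sumR += r; sumC += c; count++;
            }
        if (count == 0)
            throw new ArgumentException("Mask has no foreground pixels.", nameof(mask));
        return (maxC - minC + 1, maxR - minR + 1, (double)sumC / count, (double)sumR / count);
    }

    // Produces an outRows x outCols mask in which the object centroid sits
    // at the canvas center and the bounding box is scaled to
    // refWidth x refHeight (nearest-neighbor sampling).
    public static bool[,] Normalize(bool[,] mask, int refWidth, int refHeight, int outRows, int outCols)
    {
        var s = Measure(mask);
        double scaleX = (double)refWidth / s.Width;
        double scaleY = (double)refHeight / s.Height;
        double centerC = (outCols - 1) / 2.0;
        double centerR = (outRows - 1) / 2.0;

        var result = new bool[outRows, outCols];
        for (int r = 0; r < outRows; r++)
            for (int c = 0; c < outCols; c++)
            {
                // Map each output pixel back to the source mask.
                int srcC = (int)Math.Floor(s.CentroidX + (c - centerC) / scaleX + 0.5);
                int srcR = (int)Math.Floor(s.CentroidY + (r - centerR) / scaleY + 0.5);
                if (srcR >= 0 && srcR < mask.GetLength(0) &&
                    srcC >= 0 && srcC < mask.GetLength(1))
                    result[r, c] = mask[srcR, srcC];
            }
        return result;
    }
}
```

With this sketch, calling `MaskNormalizer.Measure` on the first mask of a track gives the reference size, and every later mask of the same object is passed through `Normalize` with that size. Two masks that differ only in position and scale should then come out nearly identical, so the remaining pixel differences reflect changes in shape.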

FAQ

Below you can find the answers for the most frequently asked questions related to this topic:

- How can I get the URL of the camera?

You can get the URL from the manufacturer of the camera. (The 10th tutorial explains how to create your own IP camera discovery program.)