How to implement Blob (binary large object) tracking

This article shows you how to detect when an object enters or exits a specified region of interest (ROI). By creating an ROI on an Onvif IP camera video stream, you will be able to monitor the objects that enter and leave this area. This is a really useful function if you use your camera for security purposes.

Image processing methods and object tracking

ROI

An ROI (Region Of Interest) is a selected part of, in our case, the video image that the user wants to filter or perform some other operation on. This tool gives us the opportunity to focus our attention on a specified part of the video image. The most common way to represent an ROI on an image is a rectangle, which clearly separates the more important section of the current image from the less important ones. More generally, an ROI can be a time or frequency interval on a waveform or the boundaries of an object on an image.
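A rectangular ROI can be sketched in a few lines. The example below is only an illustration in plain Python: the `Roi` class, its field names and the list-of-rows image layout are assumptions made for this sketch and are not part of any camera SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Roi:
    """Axis-aligned rectangle; (x, y) is the top-left corner in pixels."""
    x: int
    y: int
    width: int
    height: int

    def contains(self, px, py):
        """True if pixel (px, py) lies inside the rectangle."""
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)

    def crop(self, image):
        """Return the part of a row-major image (list of rows) inside the ROI."""
        return [row[self.x:self.x + self.width]
                for row in image[self.y:self.y + self.height]]


# A 4x6 test image whose pixel values encode their position (row * 10 + column).
image = [[r * 10 + c for c in range(6)] for r in range(4)]
roi = Roi(x=2, y=1, width=3, height=2)
cropped = roi.crop(image)
```

Cropping lets the later processing steps work on the selected area only, while `contains` answers whether a given point (for example the centre of a detected object) lies in the region.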

Steps of creating blobs (binary large objects)

First we must implement some image enhancement algorithms. These algorithms represent the first step in the detection process. They are the following:

- Background subtraction:

This step separates the background from the foreground objects. We need an image that shows only the static background. This accumulator image is subtracted from the current frame so that we obtain the foreground objects. This method is only suitable if you are certain that the background (lighting and other properties) will stay the same at all times. The image obtained this way still contains noise, which should be eliminated to avoid interference with the detection.

- Gray-scaling:

You can transform the obtained video image to grayscale, because a grayscale image contains far less data to process than a true color image. Single-channel images are easier to process, and they still contain all the necessary information.

- Binary Thresholding (Binarization):

The next step is to transform the image into a binary one, that is, to turn the previously colorful pixels into black and white ones. We can reach this goal by comparing the value of each pixel to a threshold. This is an important step. Statistical studies on the videos and their frames revealed that a threshold value of 70 is sufficient for most images. Every pixel whose value is under the threshold becomes black, and every pixel whose value is over it becomes white.

- Erosion and Dilation:

This step clears the noise from the binary image and makes the blob detection easier. Both methods are iterative, so they can be applied multiple times on an image. Erosion separates blobs that are linked to each other by noise or by some other overlap. Dilation corrects the irregular, pixel-based shapes. This is a necessary step because our purpose is to make each blob contiguous and to avoid black parts inside it.
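The four steps above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not a definitive implementation: images are lists of rows, colour pixels are `(r, g, b)` tuples in the 0 to 255 range, the grayscale conversion uses the common luma weights, a pixel exactly equal to the threshold is treated as black, and erosion and dilation use a 3x3 neighbourhood. All function names are hypothetical.

```python
def subtract_background(frame, background):
    """Per-channel absolute difference between the frame and the static background."""
    return [[tuple(abs(a - b) for a, b in zip(p, q))
             for p, q in zip(frame_row, bg_row)]
            for frame_row, bg_row in zip(frame, background)]


def to_gray(image):
    """Collapse RGB pixels to one channel using the common luma weights."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for r, g, b in row]
            for row in image]


def binarize(gray, threshold=70):
    """1 (white) for values above the threshold, 0 (black) otherwise."""
    return [[1 if value > threshold else 0 for value in row] for row in gray]


def _morph(mask, rule):
    """Apply a rule to every 3x3 neighbourhood; pixels outside the image count as 0."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [mask[ny][nx] if 0 <= ny < h and 0 <= nx < w else 0
                      for ny in range(y - 1, y + 2)
                      for nx in range(x - 1, x + 2)]
            out[y][x] = 1 if rule(window) else 0
    return out


def erode(mask):
    """A pixel stays white only if its whole 3x3 neighbourhood is white."""
    return _morph(mask, all)


def dilate(mask):
    """A pixel becomes white if any pixel in its 3x3 neighbourhood is white."""
    return _morph(mask, any)


# 7x7 scene: dark static background, a bright 3x3 object and one noise pixel.
background = [[(10, 10, 10)] * 7 for _ in range(7)]
frame = [row[:] for row in background]
for y in range(2, 5):
    for x in range(2, 5):
        frame[y][x] = (200, 200, 200)
frame[0][6] = (255, 255, 255)  # isolated noise pixel

binary = binarize(to_gray(subtract_background(frame, background)))
cleaned = dilate(erode(binary))
```

In this example the isolated noise pixel is still white after thresholding, but it disappears after one erosion, and the following dilation restores the 3x3 object to its original size.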

Blob Detection

To detect blobs properly, you need to find all of the foreground objects and recognize them as individual ones. This is sometimes difficult because some blobs may contain holes or may be fragmented. This occurs when dilation is unable to restore the object properly. Crowded areas are especially hard to analyze. Each blob has its own position, width and height. An acceptable way to obtain them is to collect the smallest and largest X and Y coordinates of the blob's pixels. In this way, one can easily determine the position of the blob. The limitation of this solution is that the blob's shape is known only as a rectangular approximation of the area surrounding it. If speed is the most important aspect of the process, you should put the blobs onto a list as they appear.
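One possible way to collect the bounding coordinates is a flood fill over the binary image. The sketch below assumes a mask stored as a list of rows with 1 for white pixels, treats diagonally touching pixels as part of the same blob (8-connectivity), and appends each blob to a list as it is found. The function name `find_blobs` is hypothetical.

```python
from collections import deque


def find_blobs(mask):
    """Return one bounding box (min_x, min_y, max_x, max_y) per 8-connected blob."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    boxes = []
    for y in range(h):
        for x in range(w):
            if mask[y][x] != 1 or seen[y][x]:
                continue
            # New blob found: flood-fill it and track the extreme coordinates.
            seen[y][x] = True
            queue = deque([(x, y)])
            min_x = max_x = x
            min_y = max_y = y
            while queue:
                cx, cy = queue.popleft()
                min_x, max_x = min(min_x, cx), max(max_x, cx)
                min_y, max_y = min(min_y, cy), max(max_y, cy)
                for ny in range(cy - 1, cy + 2):
                    for nx in range(cx - 1, cx + 2):
                        if (0 <= ny < h and 0 <= nx < w
                                and mask[ny][nx] == 1 and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((nx, ny))
            boxes.append((min_x, min_y, max_x, max_y))
    return boxes


mask = [
    [1, 1, 0, 0, 0, 0],
    [1, 0, 0, 0, 1, 0],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 0, 1, 0],
]
blobs = find_blobs(mask)
```

For the sample mask this yields two boxes, one for the L-shaped blob in the top-left corner and one for the cross-shaped blob on the right. The width and height of a blob follow from the box as `max_x - min_x + 1` and `max_y - min_y + 1`.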

Enter and Exit events

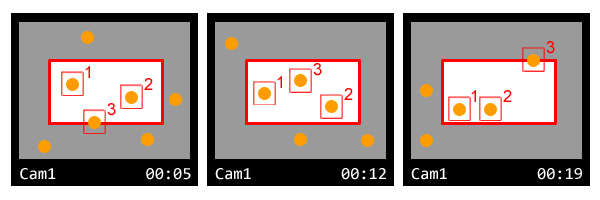

After these steps we are able to detect the blobs on the video image. Finally, we need to check whether a blob is entering or exiting the specified region. (Figure 1 shows how the object (number 3) comes into the ROI, then leaves it.)

After objects enter the ROI, each of them gets a unique identifier, which remains the same for as long as the object stays in the region of interest. When an object steps into or out of the ROI, you can signal it with an event.
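The enter and exit logic can be sketched as a small tracker that is fed the blob centres of each frame. The article does not say how a blob is recognized as the same object from one frame to the next, so this sketch assumes a simple greedy nearest-centre match with a maximum allowed jump (`max_jump`, in pixels). The class name, the `(x, y, width, height)` ROI tuple and the event tuples are all hypothetical.

```python
import itertools
import math


class RoiTracker:
    def __init__(self, roi, max_jump=20.0):
        self.roi = roi              # (x, y, width, height) of the ROI
        self.max_jump = max_jump    # assumed limit for frame-to-frame movement
        self.inside = {}            # object id -> last known centre
        self._ids = itertools.count(1)

    def _in_roi(self, point):
        x, y, w, h = self.roi
        return x <= point[0] < x + w and y <= point[1] < y + h

    def update(self, centres):
        """Feed the blob centres of one frame; return a list of (event, id) tuples."""
        events = []
        unmatched = dict(self.inside)   # objects not yet seen in this frame
        current = {}
        for centre in centres:
            if not self._in_roi(centre):
                continue
            # Greedy match against the closest object from the previous frame.
            best = min(unmatched,
                       key=lambda i: math.dist(unmatched[i], centre),
                       default=None)
            if best is not None and math.dist(unmatched[best], centre) <= self.max_jump:
                del unmatched[best]
                current[best] = centre      # same object, identifier is kept
            else:
                new_id = next(self._ids)
                current[new_id] = centre
                events.append(("enter", new_id))
        # Objects that were inside but have no match in this frame have left.
        events.extend(("exit", i) for i in unmatched)
        self.inside = current
        return events


tracker = RoiTracker(roi=(10, 10, 50, 50))
e1 = tracker.update([(5, 5)])                # still outside the ROI
e2 = tracker.update([(12, 12)])              # first object enters
e3 = tracker.update([(20, 15), (40, 40)])    # second object enters
e4 = tracker.update([(70, 15), (42, 41)])    # first object leaves
```

Greedy matching is easy to follow but can confuse objects that cross paths closely, so a real application would probably need a more robust association step.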

Occupancy

We mentioned earlier that the fastest way to collect the blobs that are in the ROI is to put them onto a list. The size of the list tells you how many objects are in the ROI at the same time. (Figure 1)

Dwell time

Dwell time is the length of time each person spends in the ROI. After implementing the previous steps, you will know when an object enters or leaves the area. All you have to do is measure the elapsed time between the two events. This can be done with a timer: each person (object) has a private timer that measures how long he or she stays in the ROI.
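A per-object timer can be as simple as storing the entry timestamp and subtracting it at exit. The sketch below assumes that enter and exit events already carry an object identifier. The `DwellTimer` class and its method names are hypothetical, and the clock is injectable so that the example can run with fixed timestamps instead of real time.

```python
import time


class DwellTimer:
    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._entered = {}      # object id -> timestamp of the enter event
        self.dwell_times = {}   # object id -> seconds spent in the ROI

    def on_enter(self, object_id):
        """Start the private timer of an object."""
        self._entered[object_id] = self._clock()

    def on_exit(self, object_id):
        """Stop the timer and return the dwell time in seconds."""
        started = self._entered.pop(object_id)
        self.dwell_times[object_id] = self._clock() - started
        return self.dwell_times[object_id]


# Fixed timestamps (in seconds) stand in for a real clock in this example.
ticks = iter([0.0, 1.5, 4.0, 6.5])
timer = DwellTimer(clock=lambda: next(ticks))
timer.on_enter(1)           # object 1 enters at t = 0.0
timer.on_enter(2)           # object 2 enters at t = 1.5
d1 = timer.on_exit(1)       # object 1 leaves at t = 4.0
d2 = timer.on_exit(2)       # object 2 leaves at t = 6.5
```

With the default `time.monotonic` clock the measured values are not affected by changes to the system time.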

FAQ

Below you can find the answers to the most frequently asked questions related to this topic:

- How can I get the URL of the camera?

You can get the URL from the manufacturer of the camera. (In the 10th tutorial you can find information on how to create your own IP camera discoverer program.)